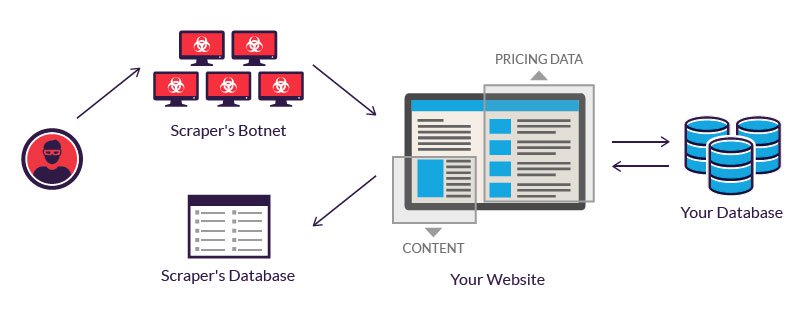

Web scraping is the process of collecting data from the World Wide Web and transforming it into a structured format. The term usually refers to an automated procedure, although formally it also covers manual collection by a person. We distinguish several techniques of web scraping.

Explore web scraping in R with rvest through a real-life project: extract, preprocess and analyze Trustpilot reviews with tidyverse and tidyquant, and much more! Trustpilot has become a popular website for customers to review businesses and services. A relatively new form of data collection is to scrape data off the internet. This involves directing R (or Python) to a specific web site (technically, a URL) and then collecting certain elements from one or more web pages.

If you’re trying to crawl a whole website or dynamically follow links on web pages, R is probably not the tool you want to use (although it is possible to do fairly extensive web scraping in R if you’re really determined; see RSelenium for one place to start). However, if you know the urls of the pages you want to collect, R is a viable option, particularly if you’re not already familiar with another programming language.

You’ll want to make sure that the information you want from the page will be in the file you download. See Inspecting Page Structure. If the data you want isn’t in the page source, then the options below aren’t what you need.

Below are a few different scenarios to get you started downloading. Depending on your particular project, a different package may be more appropriate.

Simple Downloads

Let’s assume you already have a list of the urls you need to download, and there are no complications (e.g. you don’t need to use cookies, the site you’re collecting from doesn’t redirect you to a different page, etc.). This is a good place to start, and then if you run into problems, you can always move on to more complicated solutions. If you’re just downloading the content of a web page given a url, then you can use the downloader package, which is probably the simplest of available options.

HTML files

Let’s assume you have a list of urls that point to html files – normal web pages, not pdf or some other file type. You probably have these urls stored in a file somewhere, and you can simply read them into R.

Let’s download each file and save the result locally (in a folder called collected in the current working directory, but you can change this). Let’s name each file with the UniqueID from the url, plus the .html extension. We’ll use a regular expression to get that ID out of the url (using the stringr package).

To be respectful of the site we’re collecting from, let’s pause for 2 seconds between each page we’re downloading.

Make sure to create a folder called collected in the current working directory before trying the code, or else change the path to where the files are downloading.
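The steps above can be sketched roughly as follows. This is a minimal sketch, not the original code: it assumes the stringr and downloader packages are installed, that each URL carries its identifier in a `UniqueID=` query parameter, and the example.org addresses and the `download_all` helper are placeholders invented for illustration.

```r
library(stringr)

# Placeholder URLs; in practice, read them from a file with readLines().
urls <- c(
  "https://example.org/record.html?UniqueID=1001",
  "https://example.org/record.html?UniqueID=1002"
)

# Pull the digits that follow "UniqueID=" out of each URL.
# str_match() returns a matrix; column 2 holds the captured group.
ids <- str_match(urls, "UniqueID=([0-9]+)")[, 2]
destinations <- file.path("collected", paste0(ids, ".html"))

# Download every page, pausing between requests to be polite to the server.
download_all <- function(urls, destinations, pause = 2) {
  for (i in seq_along(urls)) {
    downloader::download(urls[i], destinations[i])
    Sys.sleep(pause)
  }
  invisible(destinations)
}

# download_all(urls, destinations)  # run once the collected folder exists
```

The download call is left commented out so that the file names can be checked before any request is sent.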

You’ll see output from the downloading process as it progresses, and in the end you’ll have new files in the collected folder.

PDFs

You can also download a pdf file using the same command as when downloading an html file (although you’d generally want to know which file type you were getting ahead of time).

Here are some urls that look like the above, but they point to pdf files instead.

We can collect them the same way:

If you’re collecting pdfs, then getting data out of them will probably require you to convert them to text first (which can be a bit messy and imprecise). See How do I convert PDF files to text? to get started.

Downloading with some complications

Sometimes downloading a page is not as simple as just retrieving the html. Here are a few things that might happen, and how you can deal with them.

For these special cases, you may need to turn to the RCurl package, which is built on libcurl, the library behind the curl command line tool. Instead of downloading a page directly to a file as downloader does, RCurl’s getURL function returns the page source to you, and you then decide whether to save it to a file or do something else with it. Note that unlike downloader, RCurl will not handle https without additional libraries, so the urls in the above example won’t work well with RCurl.

Page Redirects

The downloader package will take care of at least simple page redirects for you. For example, someone thought that http://www.loser.com should redirect to Kanye West’s Wikipedia page. The downloader package will handle this fine:

If you look at tmp.txt, you’ll see the full source of the wikipedia page.

However, you may run into a more complicated redirect that downloader doesn’t handle smoothly.

If you use RCurl to try to collect a page that is being redirected, instead of automatically getting the page you’re redirected to, you’ll instead see the immediate result. For example:

will return

To follow the redirect, you need to explicitly tell RCurl to do so.
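One way to ask RCurl to follow redirects is to pass the libcurl option `followlocation`. The wrapper below is a small sketch that assumes RCurl is installed; the function name `get_following_redirects` is made up for illustration.

```r
# Wrapper around RCurl::getURL() that follows HTTP redirects.
# followlocation is the libcurl option that enables this behaviour.
get_following_redirects <- function(url) {
  RCurl::getURL(url, followlocation = TRUE)
}

# page_source <- get_following_redirects("http://www.loser.com")
```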

Cookies

See this example for a situation where you may need to use RCurl to handle cookies to collect from a web site.

Posting a Form

If you need to fill out a form and get the page that results from submitting the form, look at the postForm function in the RCurl library.

Other Issues

A number of different things can go wrong when you’re trying to download a large set of web pages. Your internet connection can go down, the page you’re trying to get can return errors, your request to a web server can time out, etc. When you’re just starting out, the most important thing is to just realize that these types of problems might come up and be on the lookout for them. If you progress to trying to build more robust downloading scripts, you can do it in R, but it may be time to move to a different programming language.
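As a starting point for a more robust script, failures can be caught with `tryCatch()` and retried a few times. The helper below is only a sketch in base R; `fetch_with_retries` and the stand-in `flaky_fetch` are hypothetical names, and the fetcher you pass in could be any function that takes a URL and returns its content.

```r
# Call fetch(url); on error, wait and try again, up to `attempts` times.
# Returns NULL if every attempt fails, so a long loop can carry on.
fetch_with_retries <- function(url, fetch, attempts = 3, wait = 5) {
  for (attempt in seq_len(attempts)) {
    result <- tryCatch(fetch(url), error = function(e) {
      message("Attempt ", attempt, " failed for ", url, ": ", conditionMessage(e))
      NULL
    })
    if (!is.null(result)) return(result)
    if (attempt < attempts) Sys.sleep(wait)
  }
  NULL
}

# Stand-in fetcher that fails twice before succeeding.
calls <- 0
flaky_fetch <- function(url) {
  calls <<- calls + 1
  if (calls < 3) stop("simulated timeout")
  paste("contents of", url)
}

result <- fetch_with_retries("https://example.org/page", flaky_fetch, wait = 0)
```

Returning `NULL` instead of stopping means one bad page does not end a run over hundreds of urls; the caller can record which urls came back empty and revisit them later.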

Processing HTML Pages

Now that you have your html pages downloaded, how are you going to get data out of them?

See Inspecting Page Structure for some basics on looking at the structure of the html source. The Inspect Element functionality in the browsers can give you the XPath to a specific element on the page, which can be useful if you want to use the XPath approach below.

To read your html from file into a variable in R (which you need to do if you’re going to process it), try something like:
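For instance, base R can read a saved page line by line and join the lines into one string. The sketch below uses a temporary file as a stand-in for a downloaded page; `read_page` and `html_path` are illustrative names.

```r
# Read a saved page into a single string, keeping the line breaks.
read_page <- function(html_path) {
  paste(readLines(html_path, warn = FALSE), collapse = "\n")
}

# Demonstration with a temporary file standing in for a downloaded page.
html_path <- tempfile(fileext = ".html")
writeLines(c("<html>", "<body><p>Hello</p></body>", "</html>"), html_path)

mypage <- read_page(html_path)
```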

Regular Expressions

If the web page is well structured, and the information you want is surrounded by html tags that make it easy to identify where on the page the data is, you may want to write regular expressions to extract the data. Learning how to write regular expressions is an endeavor in and of itself, but once you know how to use them, you’ll be amazed at how much easier it is to work with any kind of text data. In R, you may find the stringr package useful (some more details).

Here’s one simple example, using the files collected above in the Simple Downloads section. In each file, some of the key information is available in clearly labeled tags. A small sample:

If we have the text of one of the pages in a variable called mypage (we’ve read it into R and stored it in this variable), then we could extract some of this data using regular expressions:
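A sketch of what such an extraction might look like with stringr is shown here. The page content and the tag names are invented stand-ins, not the tags from the pages collected above.

```r
library(stringr)

# Stand-in for a downloaded page; the content is hypothetical.
mypage <- "<HTML><HEAD><TITLE>Annual Report</TITLE></HEAD>
<BODY><H1>Findings for 2015</H1></BODY></HTML>"

# The parentheses capture the text between the tags.
# str_match() returns a matrix; column 2 holds the captured text.
page_title <- str_match(mypage, "<TITLE>(.*?)</TITLE>")[, 2]
heading <- str_match(mypage, "<H1>(.*?)</H1>")[, 2]
```

The `.*?` is a non-greedy match, so each pattern stops at the first closing tag rather than the last one on the page.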

XPath

The XML package will let you use XPath to extract data from your pages. Using the same example as above, if we wanted to get the same pieces of information using XPath, we could do
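A minimal sketch with the XML package, using an invented page in place of the collected files (the tags and text are placeholders):

```r
library(XML)

# Stand-in for a downloaded page; the content is hypothetical.
mypage <- "<HTML><HEAD><TITLE>Annual Report</TITLE></HEAD><BODY><H1>Findings for 2015</H1></BODY></HTML>"

# asText = TRUE tells htmlParse() that mypage holds HTML, not a file name.
doc <- htmlParse(mypage, asText = TRUE)

# getNodeSet() returns every node matching the XPath expression.
page_title <- xmlValue(getNodeSet(doc, "//title")[[1]])
heading <- xmlValue(getNodeSet(doc, "//h1")[[1]])
```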

Note that we specify the tag names using lowercase even though the tags in the source are in all uppercase. The [[1]] is there because we want the first node that meets the criteria, and then we use xmlValue to get the value out of that node. If there were multiple pieces of information on the page with the same tag, then we’d need to loop through the nodes to get the values out.

This tutorial has good examples and information on using the XML package in this way.

rvest package

Yet another package that lets you select elements from an html file is rvest. rvest has some nice functions for grabbing entire tables from web pages. It will also allow you to navigate a web site as if you were in a browser (following links and such). If you’re just extracting data from simple nodes/tags and not using this additional functionality, whether you use this package, XML, or regular expressions depends mostly on preference.

Again, using the same example html file before, loaded into a variable called mypage:
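A sketch with CSS selectors might look like this. It assumes rvest 1.0.0 or later (earlier versions call the same function `html_node`), and the page content is an invented stand-in.

```r
library(rvest)

# Stand-in for a downloaded page; the content is hypothetical.
mypage <- "<HTML><HEAD><TITLE>Annual Report</TITLE></HEAD><BODY><H1>Findings for 2015</H1></BODY></HTML>"

# read_html() accepts a string of HTML as well as a file path or URL.
page <- read_html(mypage)

# html_element() returns the first match for a CSS selector.
page_title <- html_text(html_element(page, "title"))
heading <- html_text(html_element(page, "h1"))
```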

You can also use XPath expressions with the rvest package.

Want to scrape the web with R? You’re at the right place!

We will teach you from the ground up how to scrape the web with R, and will take you through the fundamentals of web scraping (with examples in R).

Throughout this article, we won’t just take you through prominent R libraries like rvest and Rcrawler, but will also walk you through how to scrape information with barebones code.

Overall, here’s what you are going to learn:

- R web scraping fundamentals

- Handling different web scraping scenarios with R

- Leveraging rvest and Rcrawler to carry out web scraping

Let’s start the journey!

Introduction

The first step towards scraping the web with R requires you to understand HTML and web scraping fundamentals. You’ll learn how to get browsers to display the source code, then you will develop the logic of markup languages which sets you on the path to scrape that information. And, above all - you’ll master the vocabulary you need to scrape data with R.

We will look at the following basics that’ll help you scrape with R:

- HTML Basics

- Browser presentation

- And Parsing HTML data in R

So, let’s get into it.

HTML Basics

HTML is behind everything on the web. Our goal here is to briefly understand how syntax rules, browser presentation, tags and attributes help us parse HTML and scrape the web for the information we need.

Browser Presentation

Before we scrape anything using R, we need to know the underlying structure of a webpage. The first thing to notice is that what you see when you open a webpage isn’t the HTML document itself; it is the browser’s rendering of the underlying HTML code. You can open any HTML document in a text editor such as Notepad to see that code.

HTML tells a browser how to show a webpage, what goes into a headline, what goes into a text, etc. The underlying marked up structure is what we need to understand to actually scrape it.

For example, here’s what ScrapingBee.com looks like when you see it in a browser.

And, here’s what the underlying HTML looks like for it

Looking at this source code might seem like a lot of information to digest at once, let alone scrape it! But don’t worry. The next section exactly shows how to see this information better.

HTML elements and tags

If you carefully checked the raw HTML of ScrapingBee.com earlier, you would have noticed things like <title>...</title>, <body>...</body>, etc. Those are tags that HTML uses, and each of those tags has its own role. For example, the <title> tag tells a browser the title of a web page; similarly, the <body> tag defines the body of an HTML document.

Once you understand those tags, that raw HTML will start talking to you, and you’ll begin to get a feeling for how you would scrape the web using R. All you need to take away from this section is that a page is structured with the help of HTML tags, and that knowing these tags helps you locate and extract information easily while scraping.

Parsing a webpage using R

With what we know, let’s use R to scrape an HTML webpage and see what we get. Keep in mind, we only know about HTML page structures so far, we know what RAW HTML looks like. That’s why, with the code, we will simply scrape a webpage and get the raw HTML. It is the first step towards scraping the web as well.

Earlier in this post, I mentioned that we can even use a text editor to open an HTML document. And in the code below, we will parse HTML in the same way we would parse a text document and read it with R.

I want to scrape the HTML code of ScrapingBee.com and see how it looks. We will use readLines() to map every line of the HTML document and create a flat representation of it.
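A sketch of that call is shown here. `readLines()` accepts a URL such as "https://www.scrapingbee.com/" as well as a file path; to keep the sketch runnable offline, it reads a small temporary file whose content is invented.

```r
# Local stand-in for the page so the sketch runs without a connection.
# Replace scrape_url with the address of the page you want to read.
scrape_url <- tempfile(fileext = ".html")
writeLines(
  c("<!DOCTYPE html>", "<html>", "<head><title>Demo</title></head>", "</html>"),
  scrape_url
)

# readLines() returns one character string per line of the source.
flat_html <- readLines(con = scrape_url, warn = FALSE)

length(flat_html)   # number of lines read
head(flat_html, 2)  # the first two lines
```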

Now, when you see what flat_html looks like, you should see something like this in your R Console:

The whole output would be a hundred pages so I’ve trimmed it for you. But, here’s something you can do to have some fun before I take you further towards scraping web with R:

- Scrape www.google.com and try to make sense of the information you received

- Scrape a very simple web page like https://www.york.ac.uk/teaching/cws/wws/webpage1.html and see what you get

Remember, scraping is only fun if you experiment with it. So, as we move forward with the blog post, I’d love it if you try out each and every example as you go through them and bring your own twist. Share in comments if you found something interesting or feel stuck somewhere.

While our output above looks great, it still is something that doesn’t closely reflect an HTML document. In HTML we have a document hierarchy of tags which looks something like

But clearly, our output from readLines() discarded the markup structure and hierarchy of HTML. Since I just wanted to give you a barebones look at scraping, this code works as an illustration.

However, in reality, scraping code is a lot more complicated. Fortunately, we have a lot of libraries that simplify web scraping in R for us. We will go through several of these libraries in later sections.

First, we need to go through different scraping situations that you’ll frequently encounter when you scrape data through R.

Common web scraping scenarios with R

Access web data using R over FTP

FTP is one of the ways to access data over the web. And with the help of CRAN FTP servers, I’ll show you how you can request data over FTP with just a few lines of code. Overall, the whole process is:

- Save ftp URL

- Save names of files from the URL into an R object

- Save files onto your local directory

Let’s get started now. The URL that we are trying to get data from is ftp://cran.r-project.org/pub/R/web/packages/BayesMixSurv/.

Let’s check the name of the files we received with get_files

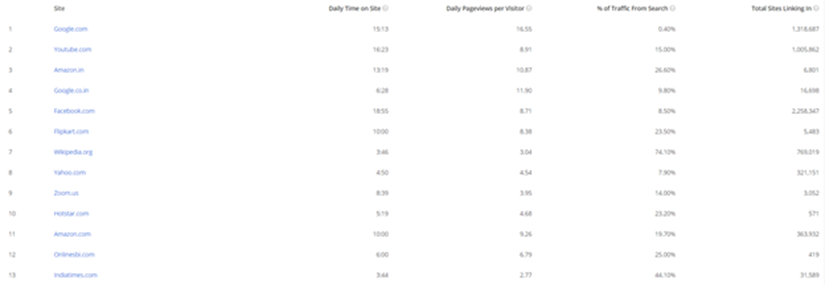

Looking at the string above can you see what the file names are?

The screenshot from the URL shows real file names

It turns out that when you download those file names you get carriage return representations too. And it is pretty easy to solve this issue. In the code below, I used str_split() and str_extract_all() to get the HTML file names of interest.
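The listing and clean-up steps could be sketched as follows. This assumes RCurl and stringr are installed; `list_ftp_files` is a made-up helper name, and the sample listing is a hypothetical string in the shape such a call returns, not the actual content of the CRAN directory.

```r
library(stringr)

# Fetch a bare directory listing over FTP.
# dirlistonly is the libcurl option that returns names only.
list_ftp_files <- function(ftp_url) {
  RCurl::getURL(ftp_url, dirlistonly = TRUE)
}

# Hypothetical listing: file names joined by carriage return + line feed.
get_files <- "BayesMixSurv.pdf\r\nChangeLog\r\nindex.html\r\n"

# Split on the line endings, then keep only names ending in .html.
filenames <- str_split(get_files, "\r\n")[[1]]
extracted_html_filenames <- unlist(str_extract_all(filenames, ".+\\.html$"))
```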

Let’s print the file names to see what we have now:

extracted_html_filenames

Great! So, we now have a list of HTML files that we want to access. In our case, it was only one HTML file.

Now, all we have to do is to write a function that stores them in a folder and a function that downloads HTML docs in that folder from the web.

We are almost there now! All we have to do is download these files to a specified folder on your local drive. Save those files in a folder called scrapingbee_html. To do so, use getCurlHandle().

After that, we’ll use plyr package’s l_ply() function.
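One possible shape for that download step is sketched below. The helper `save_html_file` and its `fetch` argument are assumptions made for illustration: `fetch` is any function that takes a URL and returns the page as text, which lets the sketch be tried offline with a stand-in before pointing it at a real server.

```r
# Save one file into `folder`, skipping files that are already there.
save_html_file <- function(filename, baseurl, folder, fetch) {
  dir.create(folder, showWarnings = FALSE)
  destination <- file.path(folder, filename)
  if (!file.exists(destination)) {
    writeLines(fetch(paste0(baseurl, filename)), destination)
  }
  invisible(destination)
}

# With RCurl and plyr installed, the real download might look like this:
# handle <- RCurl::getCurlHandle(ftp.use.epsv = FALSE)
# plyr::l_ply(extracted_html_filenames, save_html_file,
#             baseurl = "ftp://cran.r-project.org/pub/R/web/packages/BayesMixSurv/",
#             folder = "scrapingbee_html",
#             fetch = function(u) RCurl::getURL(u, curl = handle))

# Offline demonstration with a stand-in fetcher and a temporary folder.
demo_folder <- file.path(tempdir(), "scrapingbee_html")
save_html_file("index.html", "ftp://example.org/", demo_folder,
               fetch = function(u) paste("<html>fetched from", u, "</html>"))

# List the HTML files that ended up in the folder.
list.files(demo_folder, pattern = "\\.html$")
```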

And, we are done!

I can see that on my local drive I have a folder named scrapingbee_html, where the index.html file is stored. But, if you don’t want to manually go and check the scraped content, use this command to retrieve a list of HTMLs downloaded:

That was via FTP, but what about retrieving specific data from an HTML webpage? That’s what our next section covers.

Scraping information from Wikipedia using R

In this section, I’ll show you how to retrieve information from Leonardo Da Vinci’s Wikipedia page https://en.wikipedia.org/wiki/Leonardo_da_Vinci.

Let’s take the basic steps to parse information:

Leonardo Da Vinci’s Wikipedia HTML has now been parsed and stored in parsed_wiki.

But, let’s say you wanted to see what text we were able to parse. A very simple way to do that would be:
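The parsing and paragraph steps might be sketched like this with the XML package. To keep it runnable offline, the sketch parses a short invented page; for the live Wikipedia page you would first fetch the HTML text and pass that text to `htmlParse()` in the same way.

```r
library(XML)

# Inline stand-in for the page source; the content is hypothetical.
wiki_html <- "<html><body>
<p>First paragraph.</p>
<p>Second paragraph with a <a href='/wiki/Florence'>link</a>.</p>
</body></html>"

# asText = TRUE tells htmlParse() that the argument is HTML, not a file name.
parsed_wiki <- htmlParse(wiki_html, asText = TRUE)

# getNodeSet() returns an XML node set holding every <p> node.
paragraphs <- getNodeSet(parsed_wiki, "//p")

# Subset the node set like a list, then pull out the text with xmlValue().
second_paragraph <- xmlValue(paragraphs[[2]])
```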

By doing that, we have essentially parsed everything that exists within the <p> nodes. And since it is an XML node set, we can easily use subsetting rules to access different paragraphs. For example, let’s say we pick the 4th element at random. Here’s what you’ll see:

Reading text is fun, but let’s do something else - let’s get all links that exist on this page. We can easily do that by using getHTMLLinks() function:

Notice what you see above is a mix of actual links and links to files.

You can also see the total number of links on this page by using length() function:
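Both calls can be tried on a small invented page first. The sketch assumes the XML package is installed, and the links in it are placeholders.

```r
library(XML)

# Inline stand-in page mixing page links and a link to a file.
page_html <- "<html><body>
<a href='/wiki/Florence'>Florence</a>
<a href='/static/portrait.jpg'>Portrait</a>
<a href='https://example.org/'>External</a>
</body></html>"

doc <- htmlParse(page_html, asText = TRUE)

# getHTMLLinks() collects the href of every <a> element.
links <- getHTMLLinks(doc)

# length() then gives the number of links found.
link_count <- length(links)
```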

I’ll throw in one more use case here which is to scrape tables off such HTML pages. And it is something that you’ll encounter quite frequently too for web scraping purposes. XML package in R offers a function named readHTMLTable() which makes our life so easy when it comes to scraping tables from HTML pages.

Leonardo’s Wikipedia page has no HTML table though, so I will use a different page to show how we can scrape HTML tables from a webpage using R. Here’s the new URL:

As usual, we will read this URL:

If you look at the page you’ll disagree with the number “108”. For a closer inspection I’ll use the names() function to get the names of all 108 tables:

Our suspicion was right, there are too many “NULL” and only a few tables. I’ll now read data from one of those tables in R:
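The table workflow can be sketched on a small invented page. It assumes the XML package is installed; the table contents and the `scores` id are made up, and the second table stands in for the layout tables that clutter real pages.

```r
library(XML)

# Inline stand-in with one data table and one table used only for layout.
tables_html <- "<html><body>
<table id='scores'>
<tr><th>Name</th><th>Score</th></tr>
<tr><td>Ada</td><td>9</td></tr>
<tr><td>Lin</td><td>7</td></tr>
</table>
<table>
<tr><td>menu</td><td>home</td></tr>
<tr><td>menu</td><td>about</td></tr>
</table>
</body></html>"

doc <- htmlParse(tables_html, asText = TRUE)

# readHTMLTable() returns a list with one entry per <table> in the document.
tables <- readHTMLTable(doc, stringsAsFactors = FALSE)

length(tables)  # how many tables were found
names(tables)   # inspect the names to spot the tables worth keeping

# Pick one table by position to work with it as a data frame.
scores <- tables[[1]]
```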

Here’s how this table looks in HTML

Awesome isn’t it? Imagine being able to access census, pricing, etc data over R and scraping it. Wouldn’t it be fun? That’s why I took a boring one, and kept the fun part for you. Try something much cooler than what I did. Here’s an example of table data that you can scrape https://en.wikipedia.org/wiki/United_States_Census

Let me know how it goes for you. But it usually isn’t that straightforward. We have forms and authentication that can block your R code from scraping. And that’s exactly what we are going to learn to get through here.

Handling HTML forms while scraping with R

Often we come across pages that aren’t that easy to scrape. Take a look at the Meteorological Service Singapore’s page (that lack of SSL though :O). Notice the dropdowns here

Imagine if you want to scrape information that you can only get upon clicking on the dropdowns. What would you do in that case?

Well, I’ll be jumping a few steps forward and will show you a preview of rvest package while scraping this page. Our goal here is to scrape data from 2016 to 2020.
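One way to approach a dropdown is to read its options straight out of the page source. The sketch below assumes rvest 1.0.0 or later and uses invented form markup; the real page's element ids and option values may well differ.

```r
library(rvest)

# Hypothetical form markup with a year dropdown covering 2015 to 2020.
year_values <- 2015:2020
form_html <- paste0(
  "<html><body><form><select id='year'>",
  paste0("<option value='", year_values, "'>", year_values, "</option>", collapse = ""),
  "</select></form></body></html>"
)

page <- read_html(form_html)

# Select every <option> inside the dropdown and collect value and label.
year_options <- html_elements(page, "select#year option")
years <- data.frame(
  value = html_attr(year_options, "value"),
  label = html_text(year_options),
  stringsAsFactors = FALSE
)

# Keep only the years of interest.
years <- years[years$value %in% as.character(2016:2020), ]
```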

Let’s check what type of data we have been able to scrape. Here’s what our data frame looks like:

From the dataframe above, we can now easily generate URLs that provide direct access to data of our interest.

Now, we can download those files at scale using lapply().

Note: This is going to download a ton of data once you execute it.
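The URL generation and bulk download could be sketched in base R as follows. The URL template, the `weather_data` folder and the `download_one` helper are all hypothetical; replace the template with the pattern the site really uses.

```r
# One row per month from 2016 to 2020; month varies fastest.
periods <- expand.grid(month = 1:12, year = 2016:2020)

# Hypothetical URL template built from year and zero-padded month.
urls <- sprintf("https://example.org/data/%d%02d.csv", periods$year, periods$month)

# Download one file into `folder`, named after the last part of its URL.
download_one <- function(url, folder = "weather_data") {
  dir.create(folder, showWarnings = FALSE)
  destination <- file.path(folder, basename(url))
  download.file(url, destination, mode = "wb")
  destination
}

# lapply() calls download_one() once per URL and collects the saved paths.
# saved <- lapply(urls, download_one)
```

The `lapply()` call is commented out so that the generated URLs can be inspected before 60 requests are sent.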

Web scraping using Rvest

Inspired by libraries like BeautifulSoup, rvest is probably one of the most popular packages in R for scraping the web. While it is simple enough to make scraping with R look effortless, it is powerful enough to handle most scraping operations.

Let’s see rvest in action now. I will scrape information from IMDB and we will scrape Sharknado (because it is the best movie in the world!) https://www.imdb.com/title/tt8031422/

Awesome movie, awesome cast! Let's find out what was the cast of this movie.

Awesome cast! Probably that’s why it was such a huge hit. Who knows.

Still, there are skeptics of Sharknado. I guess the rating would prove them wrong? Here’s how you extract ratings of Sharknado from IMDB
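The general pattern for both the cast and the rating is to select elements with a CSS selector and pull out their text. The sketch below assumes rvest 1.0.0 or later and runs on invented markup: the class names, actor names and rating value are placeholders, not IMDb's actual page structure or data, so the selectors have to be adapted after inspecting the real page.

```r
library(rvest)

# Hypothetical markup standing in for a movie page.
movie_html <- "<html><body>
<ul class='cast'><li>Actor One</li><li>Actor Two</li></ul>
<span class='rating'>3.5</span>
</body></html>"

page <- read_html(movie_html)

# html_elements() returns every match; html_text() extracts the text.
cast <- html_text(html_elements(page, "ul.cast li"))

# html_element() returns the first match only, which suits a single value.
rating <- as.numeric(html_text(html_element(page, "span.rating")))
```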

I still stand by my words. But I hope you get the point, right? See how easy it is for us to scrape information using rvest, while we were writing 10+ lines of code in much simpler scraping scenarios.

Next on our list is Rcrawler.

Web Scraping using Rcrawler

Rcrawler is another R package that helps us harvest information from the web. But unlike rvest, we use Rcrawler for network graph related scraping tasks a lot more. For example, if you wish to scrape a very large website, you might want to try Rcrawler in a bit more depth.

Note: Rcrawler is more about crawling than scraping.

We will go back to Wikipedia and we will try to find the date of birth, date of death and other details of scientists.

Output looks like this:

And that’s it!

You pretty much know everything you need to get started with Web Scraping in R.

Try challenging yourself with interesting use cases and uncover challenges. Scraping the web with R can be really fun!

While this whole article tackles the main aspect of web scraping with R, it does not talk about web scraping without getting blocked.

If you want to learn how to do it, we have written this complete guide, and if you don't want to take care of this, you can always use our web scraping API.

Happy scraping.